The quiet progress in keeping quantum bits from falling apart

I was debugging some finicky old code late one night a few years back when it struck me how much modern computing still comes down to getting the basics right: error handling, redundancy, making sure one bad bit doesn’t collapse the whole system. That memory resurfaced as I’ve followed the latest work in quantum computing. For decades, the promise of machines that solve problems classical computers can’t touch has been held back by one stubborn problem: qubits are fragile. A cosmic ray, a slight temperature shift, or just the passage of time can corrupt a quantum state and wreck a calculation. The long-standing answer is quantum error correction, which spreads the information of one stable logical qubit across many physical ones. Making it work reliably at scale has been the real challenge.

The long road to logical qubits

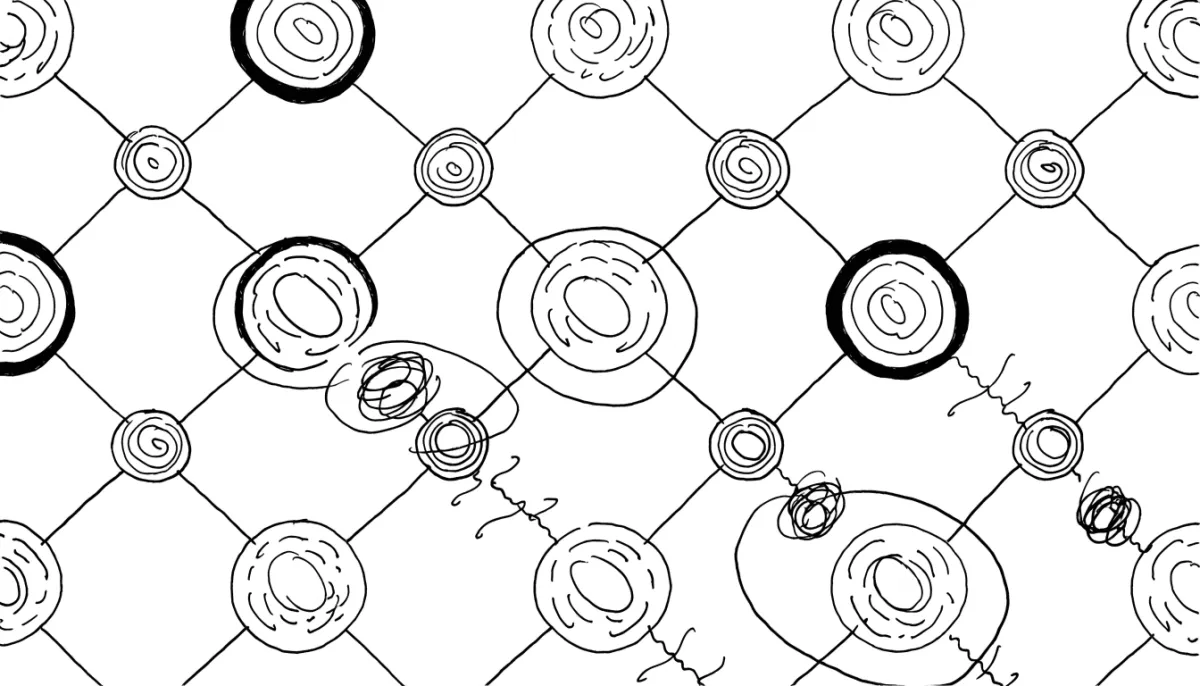

The theory of quantum error correction isn’t new. One leading approach, the surface code, dates back to the late nineties. The concept is direct: distribute a single logical qubit’s information over a grid of physical qubits, then repeatedly measure syndromes that flag errors without collapsing the quantum data. Fix the errors in real time, and larger grids should yield more reliable logical qubits. But for years the gap between theory and hardware stayed wide. Physical error rates ran too high, and scaling the grid often amplified problems rather than solving them.
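To make the syndrome idea concrete, here is a minimal sketch using a classical three-bit repetition code, a far simpler relative of the surface code. It only handles bit flips and is not how any real quantum decoder is written; the function names and the lookup table are hypothetical, chosen purely for illustration. The point it shows is that the parity checks reveal where neighbours disagree without revealing the stored value itself.

```python
def measure_syndrome(bits):
    """Return the parity of each neighbouring pair of bits.

    A 1 means the two neighbours disagree. The syndrome says nothing
    about whether the encoded value is 0 or 1.
    """
    return tuple(bits[i] ^ bits[i + 1] for i in range(len(bits) - 1))


# Illustrative lookup table for the 3-bit code only: maps each syndrome
# to the single bit most likely to have flipped (None means no error).
CORRECTION = {
    (0, 0): None,
    (1, 0): 0,
    (1, 1): 1,
    (0, 1): 2,
}


def correct(bits):
    """Apply the single-flip correction implied by the syndrome."""
    syndrome = measure_syndrome(bits)
    flipped = CORRECTION[syndrome]
    fixed = list(bits)
    if flipped is not None:
        fixed[flipped] ^= 1
    return fixed, syndrome


# Logical 0 is stored as [0, 0, 0] and logical 1 as [1, 1, 1].
# A flip on the middle bit gives the same syndrome in both cases.
print(correct([0, 1, 0]))
print(correct([1, 0, 1]))
```

Two flips at once, such as `[1, 1, 0]` when the stored value was 0, get "corrected" to the wrong codeword. That failure mode is the reason larger codes are needed, and why they only help once the physical error rate is low enough.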

Things shifted in late 2024 with Google’s Willow processor. On a 105-qubit chip, the team showed exponential suppression of errors below the surface-code threshold, something the field had pursued for nearly thirty years. As they scaled the code distance from 3 to 5 to 7 (a 3x3, 5x5, and then 7x7 lattice of data qubits, the last using 101 qubits in total), the logical error rate fell by a factor of roughly 2.14 at each step. At distance 7 the logical memory reached an error rate of about 0.143 percent per cycle and outlived the best physical qubit on the chip by a factor of 2.4. They managed real-time decoding too, with latencies between 50 and 100 microseconds.
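A quick back-of-the-envelope sketch shows what that kind of scaling implies if it continues. It rests on two loudly stated assumptions: that the suppression factor of about 2.14 per distance step keeps holding at larger distances, which is exactly what remains to be proven, and that a distance-d surface code needs 2d² − 1 physical qubits, the textbook count. That count gives 97 at distance 7, slightly below the 101 quoted above. The function names are hypothetical.

```python
LAMBDA = 2.14       # error suppression per distance step (d -> d + 2), from the text
EPS_D7 = 0.143e-2   # logical error per cycle at distance 7, from the text


def projected_error(d, lam=LAMBDA, eps_ref=EPS_D7, d_ref=7):
    """Extrapolate the logical error per cycle to an odd code distance d.

    Assumes the same suppression factor applies at every step, which is
    an extrapolation and not a measured result.
    """
    if d % 2 == 0:
        raise ValueError("this sketch only handles odd code distances")
    steps = (d - d_ref) // 2
    return eps_ref / lam ** steps


def physical_qubits(d):
    """Textbook surface-code count: d*d data qubits plus d*d - 1 measure qubits."""
    return 2 * d * d - 1


for d in (3, 5, 7, 9, 11, 15, 21, 27):
    print(f"d={d:2d}  qubits={physical_qubits(d):5d}  "
          f"error/cycle ~ {projected_error(d):.2e}")
```

Under these assumptions, the projected error per cycle first drops below one in a million at distance 27, which costs 1,457 physical qubits for a single logical qubit. That is the gap between today's demonstration and a useful machine.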

Beyond Google: IBM’s methodical approach

Google isn’t the only one moving the needle. IBM has laid out a clear roadmap that could yield tangible steps forward soon. They’re preparing processors like the Kookaburra module, built around quantum low-density parity-check (qLDPC) codes, specifically a family known as bivariate bicycle codes, that could protect data with far fewer physical qubits, perhaps a tenfold efficiency gain over surface codes. IBM aims to demonstrate a scientific quantum advantage by the end of next year, including a fault-tolerant module. Looking further out, they talk about a full-scale fault-tolerant system by 2029 that could run circuits with 100 million gates across 200 logical qubits.

These codes, paired with improved decoding methods like IBM’s Relay-BP that runs efficiently on hardware, sketch a practical engineering route. Other groups are trying different paths: QuEra with neutral-atom systems, and startups like Alice & Bob with long-lived cat qubits that might lighten the error-correction load from the start.

What changes beyond the lab

This matters outside physics labs because fault-tolerant quantum computing could open new doors in drug discovery by accurately simulating complex molecules, in materials science for better batteries or superconductors, and in tough optimization problems for logistics or finance. It also threatens today’s widely used public-key cryptography, which is why post-quantum alternatives will be needed. We’re not talking about consumer devices anytime soon. The machines will probably first serve as specialized accelerators alongside classical supercomputers, handling the hardest pieces of larger problems.

Next year feels like a possible turning point, when several roadmaps converge on demonstrations that move past lab milestones into clearer building blocks for fault tolerance. Of course the obstacles are still enormous: scaling to thousands or millions of physical qubits without new error correlations, tight integration with classical control systems, and bringing costs into some realistic range.

There’s hype to temper, too. Quantum stocks swing wildly and timelines have slipped before. But watching error rates fall exponentially instead of in small linear steps marks a real shift in the conversation, from whether correction can work to how quickly we can put it to use.

Looking ahead

I’ve seen enough technology cycles to understand that moments like these seldom arrive with dramatic fanfare or instant transformation. They tend to reshape what’s possible gradually, the way early internet protocols or solid-state drives quietly enabled the tools we now take for granted. The true test will be whether those exponential gains hold as systems grow larger and more entangled.

For the moment it feels quietly hopeful to watch the physics and engineering finally line up. Next year won’t deliver fully fault-tolerant quantum supercomputers ready for every problem, but it may be when we stop asking if error correction can succeed and begin exploring what we might actually build with it.